
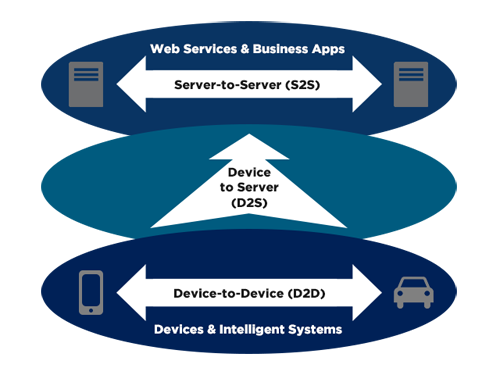

Does it seem a bit weird that machines are having more conversations than human beings at this point? Well, this disparity is about to get even more lopsided as the Internet of Things (IoT) permeates the world. Devices will need to communicate with other devices (D2D), collected device data will need to be sent to the server infrastructure (D2S), and then the server infrastructure will need to share this device data (S2S), perhaps passing it on to programs, devices, or people.

Unless you’ve managed to avoid technology websites over the past several years, you know that the Internet of Things is a big deal. Organizations across the globe are determining what the IoT will mean for their business and how to prepare for the rapid increase in connected “things.” For performance testing professionals, the IoT can seem even more daunting as it introduces considerable change that may end up affecting long-standing testing strategies.

We’d like to address these concerns and discuss the ways in which load and performance testers can confidently approach the testing of IoT applications within their organization.

It’s completely understandable for performance testers to worry about how the Internet of Things will affect their jobs and processes. However, there really isn’t much to fear. Performance testing IoT applications is not all that different from testing web and mobile applications. Testers will just need to be aware of certain aspects of IoT devices that differ from web and mobile apps.

For example, “things” maintain a constant connection with the server. This results in a different usage pattern than that of a user or mobile device. Chances are, you’ll need to increase capacity in order to handle these kinds of connections.
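To see why always-on connections change capacity planning, a back-of-the-envelope comparison can help. The sketch below applies Little's Law (average concurrency = arrival rate × time each request holds a connection) to short-lived web requests and contrasts it with devices that stay connected. The function names and every number are illustrative assumptions, not measurements.

```python
def concurrent_web_connections(requests_per_second: float, seconds_per_request: float) -> float:
    """Little's Law: average concurrency = arrival rate * time a request holds a connection."""
    return requests_per_second * seconds_per_request


def concurrent_device_connections(devices: int, fraction_online: float) -> float:
    """Always-connected devices each hold one connection for as long as they are online."""
    return devices * fraction_online


# Hypothetical figures, chosen only to illustrate the difference in scale.
web = concurrent_web_connections(requests_per_second=2000, seconds_per_request=0.25)
iot = concurrent_device_connections(devices=100_000, fraction_online=0.9)

print(f"web: ~{web:.0f} open connections")
print(f"iot: ~{iot:.0f} open connections")
```

Under these assumptions the web workload keeps a few hundred connections open at a time, while the device fleet keeps tens of thousands open, even though the devices may send far less data each.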

As with web and mobile applications, the IoT will require strong testing capabilities in order to guarantee that the performance of the services meets end-user requirements in addition to the SLAs between service providers and consumers. To put it simply, ensuring IoT application performance comes down to properly testing the network communication and internal computation.

That being said, the IoT does still introduce certain challenges for performance testers that will need to be addressed.

The number one challenge testers will face when dealing with the IoT is simply an added layer of complexity. Performance testers will need to account for more devices, whether it’s a smartphone someone uses to control a “thing” or the “thing” itself. Another challenge will be determining how to record messages from the connected objects. By now, most load and performance testers are comfortable recording traffic from web browsers, mobile devices, and the like. However, recording traffic from objects that offer no way to change their settings may prove difficult.

Different usage conditions also introduce a challenge for IoT performance testers. The reliability of an end user’s internet connection will play a large role in determining the performance of an application, so it’s essential to verify that data will still be detected and properly stored in the event of a disruption in service.
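One way to check this behavior is to simulate an outage and assert that nothing is lost. The sketch below is a minimal in-memory simulation, assuming the device buffers readings locally and flushes them when the link returns. `FlakyLink` and `BufferingDevice` are hypothetical test doubles, not part of any real tool.

```python
from collections import deque


class FlakyLink:
    """Simulated network link that is down during the given ticks (hypothetical test double)."""

    def __init__(self, down_ticks):
        self.down_ticks = set(down_ticks)
        self.received = []  # what the "server" has stored so far

    def send(self, tick, message):
        if tick in self.down_ticks:
            return False  # delivery failed; the caller must keep the message
        self.received.append(message)
        return True


class BufferingDevice:
    """Device that queues readings locally and flushes them whenever the link is up."""

    def __init__(self, link):
        self.link = link
        self.pending = deque()

    def report(self, tick, reading):
        self.pending.append(reading)
        # Flush oldest-first and stop at the first failure so ordering is preserved.
        while self.pending and self.link.send(tick, self.pending[0]):
            self.pending.popleft()


link = FlakyLink(down_ticks=range(3, 7))  # simulated outage during ticks 3 to 6
device = BufferingDevice(link)
for tick in range(10):
    device.report(tick, f"reading-{tick}")

expected = [f"reading-{t}" for t in range(10)]
assert link.received == expected, "data was lost or reordered during the outage"
print(f"delivered {len(link.received)} of {len(expected)} readings")
```

In a real test the simulated link would be replaced by actual network disruption, but the assertion stays the same: every reading produced during the outage must eventually arrive, in order.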

Testers will need to consider factors like network bandwidth, latency, packet loss, and a large number of concurrent users, just as they would with a web or mobile application. The difference is that the implications are much greater when these factors degrade performance. Instead of a website or mobile application crashing, a physical device may fail to respond to a user’s command.

To effectively account for the number of devices, regulations, and networks communicating in real time, a foolproof testing strategy is required.

So how can testers address these challenges while working towards the development of a foolproof testing strategy? First off, teams will need to prioritize their test cases. Here comes the good news: you don’t need to test everything right away. Begin by identifying the areas that will take up the most testing time (i.e., your critical business transactions).

Performance problems with some transactions may not pose as big of a risk to a brand’s reputation or revenue, but for others, seamless performance is absolutely critical. As an example, we’ll compare the consequences of performance problems for two different application scenarios.

Scenario 1: Connected refrigerator

Let’s say an application is designed to monitor a connected refrigerator. It will keep track of how the engine is behaving, how the light is working, if the door is closing properly, and so on. These metrics will need to be sent to a data center. If the data center fails to receive some of these messages due to drops in performance, chances are it won’t have a large impact on your business. This example illustrates the usage of the IoT to simply monitor the health of manufactured objects in order to provide a better product in the future.

Scenario 2: Car manufacturing assembly line

An application is integrated into each machine that is used to build a car along an assembly line. These machines are constantly sending messages (e.g., building process is at this stage, currently constructing this part, etc.). This kind of application would help an organization to monitor exactly how its cars are being built. These messages are extremely critical to the success of the business. If the building process suddenly takes longer than it should and no one intervenes, production numbers will drop, directly and negatively impacting the organization.

In this case, performance testing is paramount. Testers will have to ensure that the machines can handle any given load and that messages are being delivered and received in real time.
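A "real time" requirement is easier to verify once it is expressed as a percentile target, since an average can hide rare but severe delays. The following is a minimal sketch using the nearest-rank percentile method. The latency samples and the 100 ms target at the 95th percentile are hypothetical values, not figures from any SLA.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not samples:
        raise ValueError("no samples")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_sla(latencies_ms, pct, limit_ms):
    """True if the pct-th percentile latency is within the limit."""
    return percentile(latencies_ms, pct) <= limit_ms


# Hypothetical message delivery latencies in milliseconds, with one slow outlier.
latencies_ms = [12, 15, 11, 14, 13, 240, 16, 12, 15, 14]

print("median:", percentile(latencies_ms, 50), "ms")
print("p95:", percentile(latencies_ms, 95), "ms")
print("meets 100 ms p95 target:", meets_sla(latencies_ms, 95, 100))
```

With these samples the median looks healthy, but the single slow delivery pushes the 95th percentile over the target, which is the kind of delay an assembly line cannot absorb.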

Not only should you test for varying types of data over different devices and networks, but you should also set monitors to carefully track device system statistics (e.g., temperature changes, power usage, memory usage, etc.) and measure response times between different network layers.

Most IoT protocols run on top of a TCP or UDP transport layer. Because this traffic sits at a lower network layer than typical web traffic, record it with a network sniffing tool like Wireshark, which can capture network communication from one location to another. Wireshark will need to be configured to capture only the IoT communication, for example by filtering on the device’s address or the protocol’s port.

Once this traffic is recorded, manually recreate the captured messages in a load testing tool that supports the IoT protocols your devices use.
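To give a sense of what recreating a message by hand involves, here is a minimal sketch that builds one packet byte by byte. The article does not name a protocol, so this assumes MQTT 3.1.1 over TCP purely as an example. The topic and payload are hypothetical, and only the simplest case (a QoS 0 PUBLISH) is covered.

```python
def encode_remaining_length(length: int) -> bytes:
    """MQTT variable-length integer: 7 data bits per byte, high bit set if more bytes follow."""
    if not 0 <= length <= 268_435_455:
        raise ValueError("length out of range for MQTT")
    out = bytearray()
    while True:
        byte, length = length % 128, length // 128
        if length > 0:
            byte |= 0x80  # continuation bit
        out.append(byte)
        if length == 0:
            return bytes(out)


def build_publish(topic: str, payload: bytes) -> bytes:
    """Build a QoS 0 PUBLISH packet (no DUP, no RETAIN, so no packet identifier)."""
    topic_bytes = topic.encode("utf-8")
    # Variable header: topic name prefixed by its 2-byte big-endian length.
    variable_header = len(topic_bytes).to_bytes(2, "big") + topic_bytes
    body = variable_header + payload
    # Fixed header: packet type 3 (PUBLISH) in the high nibble, all flags zero.
    return bytes([0x30]) + encode_remaining_length(len(body)) + body


# Hypothetical topic and payload, loosely modeled on the connected refrigerator scenario.
packet = build_publish("fridge/42/door", b"closed")
print(packet.hex(" "))
```

Comparing bytes built this way against the capture is a quick check that the recreated message matches what the device actually sends. In practice, a load testing tool with native protocol support would handle this encoding for you.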

It’s only a matter of time before IoT application testing becomes a norm. With a strategic approach to the development and implementation of an IoT testing plan, you will be able to more easily ensure your application’s availability and performance.

Start off by working with your engineers to verify SLAs for the objects in question. Next, determine your peak load by projecting the number of objects you expect to be connected to the server at any one time. Then, in order to generate test cases, define typical and atypical use cases for your objects. Finally, find out if your application is using any unique protocols and choose a testing tool that will allow you to quickly and easily performance-test these protocols.

The Internet of Things doesn’t have to be so daunting. Keep the aforementioned approach in mind when performance testing your applications, and make sure that the IoT implementation has a real impact on your business process. Remember, good performance testing is a matter of good performance design.

This blog was originally published in 2015 and was refreshed in July 2021.
